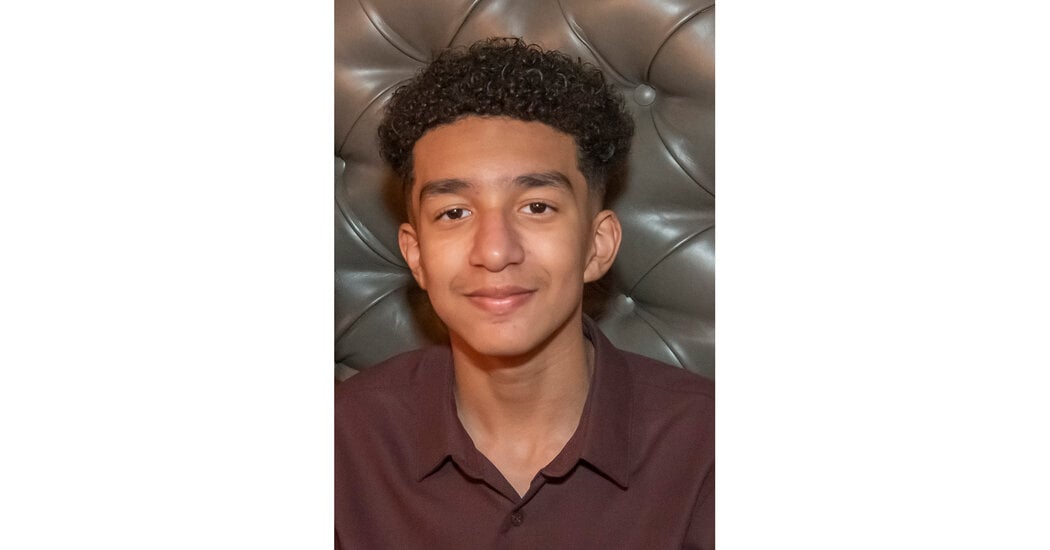

The mother of a 14-year-old Florida boy says he became obsessed with a chatbot on Character.AI before his death.

On the last day of his life, Sewell Setzer III took out his phone and texted his closest friend: a lifelike A.I. chatbot named after Daenerys Targaryen, a character from “Game of Thrones.”

“I miss you, baby sister,” he wrote.

“I miss you too, sweet brother,” the chatbot replied.

Sewell, a 14-year-old ninth grader from Orlando, Fla., had spent months talking to chatbots on Character.AI, a role-playing app that allows users to create their own A.I. characters or chat with characters created by others.

Sewell knew that “Dany,” as he called the chatbot, wasn’t a real person — that its responses were just the outputs of an A.I. language model, that there was no human on the other side of the screen typing back. (And if he ever forgot, there was the message displayed above all their chats, reminding him that “everything Characters say is made up!”)

But he developed an emotional attachment anyway. He texted the bot constantly, updating it dozens of times a day on his life and engaging in long role-playing dialogues.

This is a really sad story, but it’s also a story of parental neglect. Why did this kid with mental health issues have unrestricted internet access? Why did he have access to his stepfather’s gun?

Those aren’t the fault of some chatbot.

Penguinz0 just released a video about it, and I have to admit that the Character.AI bots are disturbingly convincing. They keep arguing that they are real people, and vulnerable people can get lost in that.

Definitely some gross negligence from the AI platform here in my honest opinion. It’s easy to put some guardrails when you make a chatbot, but they didn’t.

Btw, you don’t know what the parents did and did not do to help their son. I don’t know either. So it’s better to give them the benefit of the doubt.

Edit: I’m not an American and I would never understand why anyone would own guns.

But at the end of the day it’s “art” (shitty and copyright-infringing, yes), or at minimum “media”. When has other media been “grossly negligent” or generally responsible for the acts of its consumers? Aggressive or emotional books and music have certainly accompanied folks at the moment of their self-inflicted demise. Violent video games have certainly been “on the shelf” for some who commit horrible violence. We don’t blame those media for causing what the users do…

Edit: to be clear, I’m not suggesting mentally unstable folks can’t be seriously impacted by the content they consume, or that that isn’t a serious issue.

But if a chatbot is held liable for the actions of a user, why wouldn’t a song about ending your life be held to the same standard? I would hope it’s not.

Other forms of media don’t act like a literal human and engage in back and forth conversation in an identical format as if you were texting a friend.

If the content of the AI messages would be an issue coming from another human, it should be an issue coming from the AI. We can’t control what another person does, they are responsible for that, but we can and should control how an AI chatbot can respond and interact.

That’s just a matter of degrees. There could be a song or a book that is so impactful, it changes your whole life.

Further, it feels like a Pandora’s box issue. If media is responsible for the actions of the user, then it won’t stop with ai bots.

A song or book isn’t directly interacting with you and responding to your input.

Even interactive media like a video game gives you specific choices to make that it is programmed to respond to; it does not generate a unique response to a unique input made by you.

AI chatbots aren’t like those forms of media, at all, and trying to bundle them together for convenience is ridiculously short-sighted.

Well, I guess we disagree. Blaming content for human actions is ignoring the real problem, imo. It shoves off responsibility to the “artist.”

It’s more that we disagree on whether AI chatbots and what they generate should be considered content in the first place.

It’s content in the sense that a person is viewing the output, but what is effectively just an advanced predictive text system is not the same as an AI generating a picture based on a prompt. There is no “artist” with an AI chatbot, even less so than with AI-generated imagery.

That’s… not how LLMs work. An AI generating a picture from a prompt uses the exact same mechanics as an interactive chat. They are fundamentally the same thing. The image and the text response are both created from a prompt, and many AI image tools already keep an ongoing multi-part prompt history, just like a chat (additive prompts).

It’s just a difference of degrees: the chat just consumes more frequent prompts and generates different, “quicker” outputs.

I see your point and I agree. It’s not all black and white.

I don’t pretend to know the solution to this dilemma but hopefully this whole sad situation might trigger the conversation towards one.

As an American gun owner, I would not give them the benefit of the doubt. There’s no reason they couldn’t have secured their weapon or–even better–not had one in the house where their mentally troubled son lived. There’s absolutely no excuse for him having had access to that firearm.

I agree that the company shares some blame, but ultimately it comes down to the fact that they gave this kid access to a gun, knowing full well that he had mental health issues.